Normal map crazy bump cinema 4d

In Cinema 4D, if you choose Render->BakeObject you have only object space. I have successfully used it to apply bone weights and UVs across dissimilar characters. Pretty close, considering the topology and polycount were not that similar except for each being a closed mesh. In the docs they show a sphere's UVs etc. transferred to a cube. Just choose your set-up model, drag the target object into the Target field, choose one of the algorithmic methods and what you want transferred, and click. This will probably be VERY useful for future projects. Actually, if you have the Mocca3 module you can transfer vertex maps, bone weights, selection sets, textures, morphs and UVs using VAMP.

#Normal map crazy bump cinema 4d how to#

That’s all for today! In the process I have learned about how Normal Maps work under the hood a little bit better, but also how to do GLSL like kernels in Core Image. I have a few new references and I’ll probably do a follow up, with a better, more accurate, and cleaner solution that I can Open Source.

#Normal map crazy bump cinema 4d code#

I do not plan to release the code online as it’s very dirty, but be sure to hit me up on Twitter if you want to take a look at it.

#Normal map crazy bump cinema 4d software#

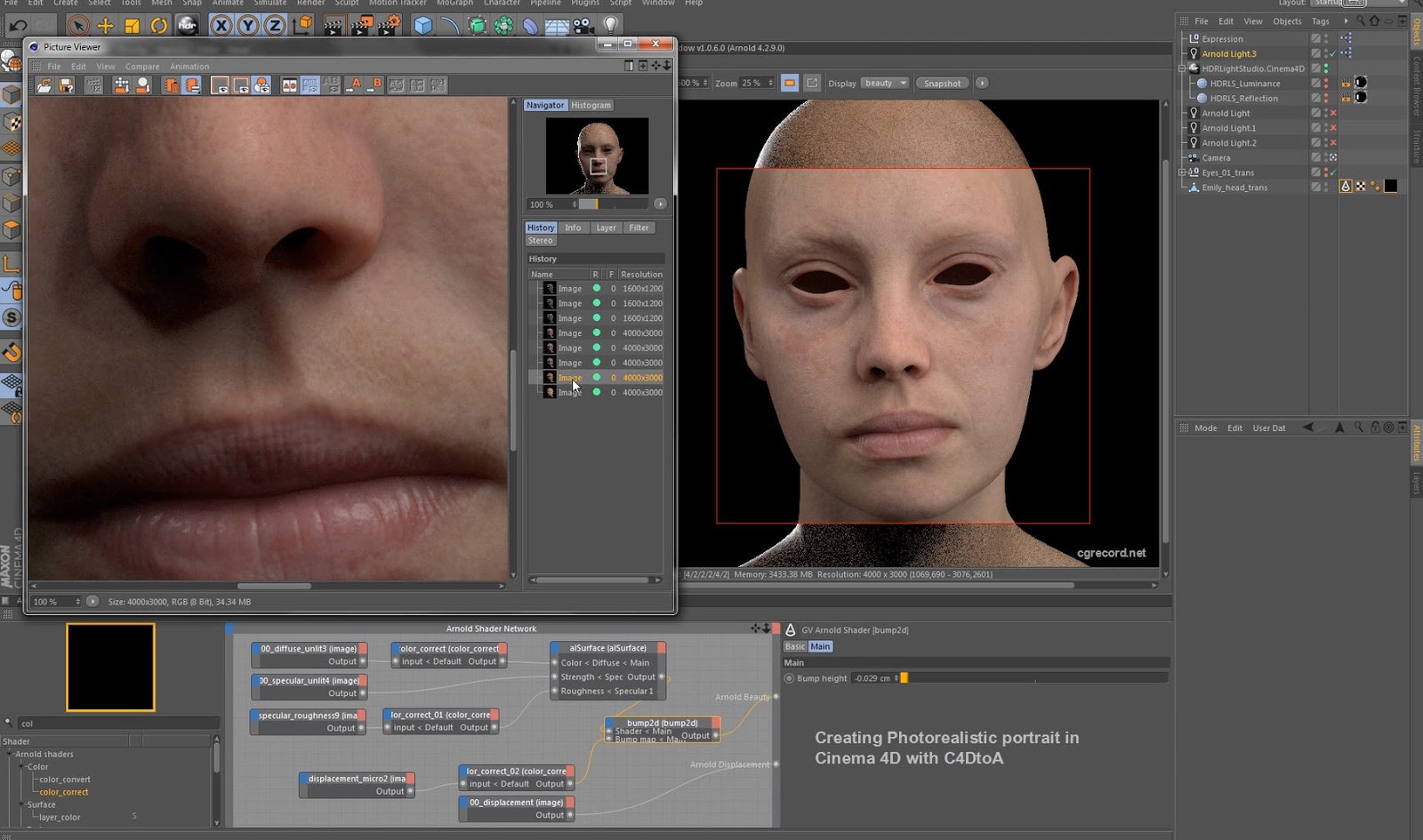

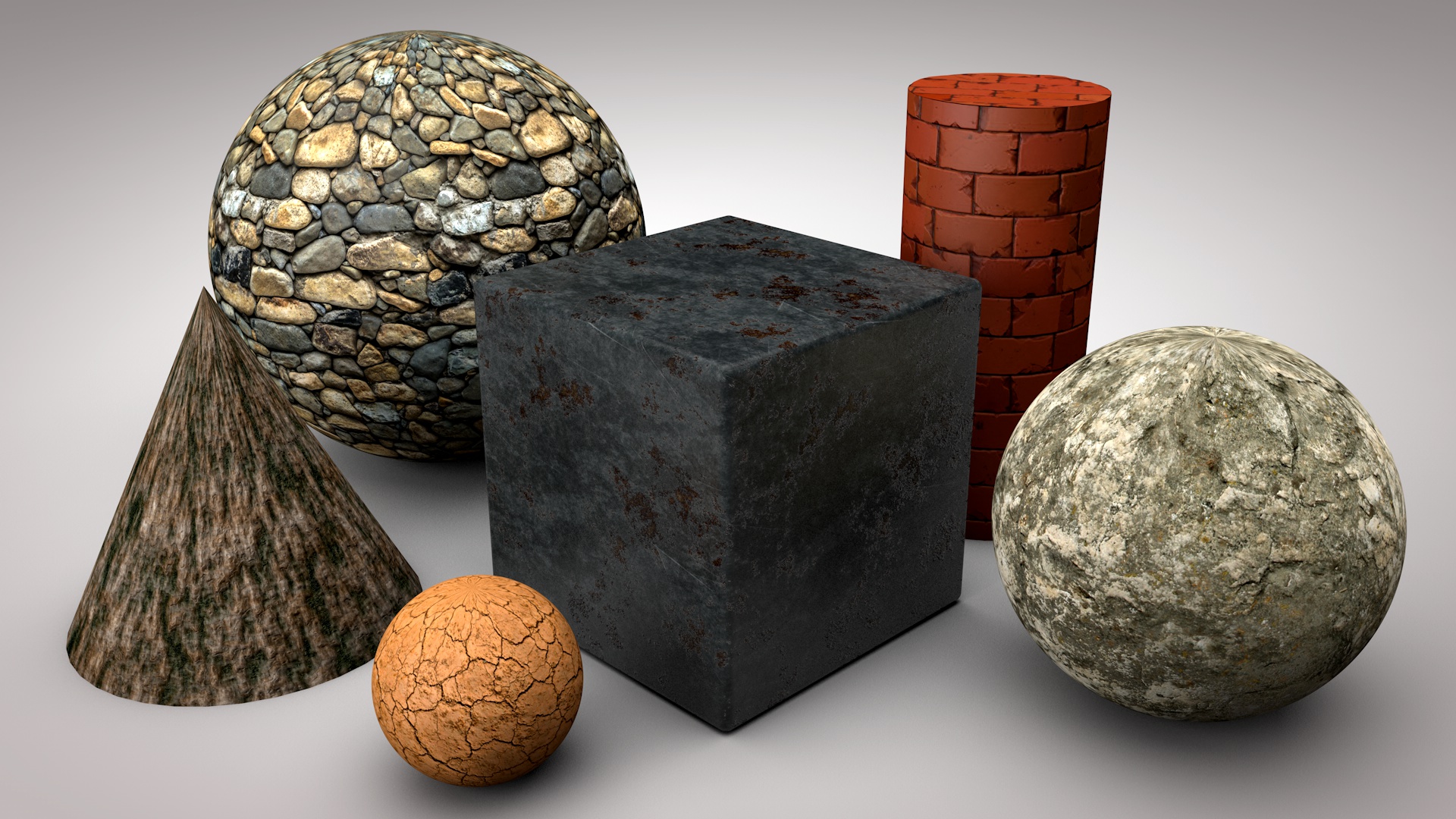

The result not not anywhere near other software such as CrazyBump, but it’s a pretty good start! The algorythm needs some tweaking since it’s based on raw Sobel values. Not the greatest result, but it sure works. It works! I pulled the image out and plugged it into Cinema 4D: Time to run the code, I have my fingers crossed… I apply it to my CIImage, convert it NSImage, before displaying it on screen. Once I’m done, I add the B component for the Z vector, and output everything back into a CIFilter. There is not turning back now, I’ll simply make the loop myself and write all the code by hand. The linked code uses mat3, which, according to Apple’s documentation, is not allowed in Kernel code. How great is that? The code is in Swift, so I will need to port it to Objective-C, but that should be trivial. Well, the example code they use is a Sobel operation. While looking for solutions, I randomly found this article, talking about CoreImage. I don’t know how or why, but it’s not worth it to try to debug it. When I tried to run Apple’s code, I hit my first road block: no error, but also no image, and apparently no data. Note that to use Core Image on OSX, you will need to add QuartzCore by including #import in your header files. This is sadly not included in Core Image, but luck is on our side as Apple has built a sample code that includes a Sobel effect. There are a few ways to do it built in Core Image, the most recommended for Normal generation is called a Sobel Operator 3x3 detection. The first thing to do to our image is perform edge detection. I subclassed NSImage and added -(NSImage*) normal function. Thankfully, there are a few way to guess, or at the very least emulate the results we are looking for.įor testing purposes, I set up a simple OSX App with an Image View to display this texture. That means that we have no way to no what goes up, and what goes down for a given pixel of a texture. 
It may seem obvious, but an important factor is the fact that there is no depth information embedded in a texture. Sometimes, you only have a texture to begin with, and pulling the information from it is the most accurate way to go. In an ideal world, you would make everything from scratch, build details with a software such as Z-Brush and bake everything down. The main problem with Normal Maps, is that they are not easy to create from a texture.

That resulting vector is then compared to various properties, such as light position and specularity used to raytrace or look up reflections on a cube map or even used to fake ambient occlusion (which will be the subject of another article). X, Y and Z are combined to make a vector, that is then “multiplied” to the current normal of the polygon (the “up” vector of the plane formed by its three points). Normal map work pretty easily, when used in tangent space: one color channel is X, another is Y, the last one is Z. They are particularly used in video games, to add embossing or 2D pixel relighting without extra polygons or expensive geometry testing. if you are not familiar with these, they are images that add light and shadow details to 2D or 3D objects. Today, I’ll explore a topic that I have been researching for a little while: Normal Maps.
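The approach described in this post (a 3x3 Sobel pass for the X and Y components, then a constant Z written to the blue channel) can be sketched in a few lines of plain Python. This is not the Core Image kernel from the post: the function name `height_to_normal_map`, the `strength` parameter, the border clamping, and the choice to treat pixel brightness as height are all assumptions made for illustration, and the sign of the green channel varies between renderers.

```python
import math

# 3x3 Sobel kernels for the horizontal (X) and vertical (Y) gradients.
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def height_to_normal_map(height, strength=1.0):
    """Turn a 2D grid of brightness values in [0, 1] into RGB normal-map pixels.

    `strength` is a hypothetical tuning knob: larger values exaggerate bumps.
    """
    rows, cols = len(height), len(height[0])

    def sample(r, c):
        # Clamp coordinates so border pixels reuse their nearest neighbour.
        r = min(max(r, 0), rows - 1)
        c = min(max(c, 0), cols - 1)
        return height[r][c]

    normal_map = []
    for r in range(rows):
        row = []
        for c in range(cols):
            gx = gy = 0.0
            for kr in range(3):
                for kc in range(3):
                    value = sample(r + kr - 1, c + kc - 1)
                    gx += SOBEL_X[kr][kc] * value
                    gy += SOBEL_Y[kr][kc] * value
            # Assumption: brighter means higher, so the normal tilts away
            # from increasing brightness. Z is a constant before normalising.
            nx, ny, nz = -gx * strength, -gy * strength, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            # Remap each component from [-1, 1] to an 8-bit channel.
            row.append(tuple(
                round((n / length * 0.5 + 0.5) * 255) for n in (nx, ny, nz)
            ))
        normal_map.append(row)
    return normal_map
```

A perfectly flat input produces `(128, 128, 255)` for every pixel, the pale blue that dominates most tangent-space normal maps. Raising `strength` is one simple way to tweak the raw Sobel values mentioned above.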